Modulo is not a GitHub bot

We're not a GitHub issue fixer, and the fact that people think so is our fault. Here is where debugging actually happens (the IDE, the issue tracker, the observability pipe) and what a debugging agent has to see to be useful.

Most people who land on fixbugs.ai still think we're a GitHub bot. That's our fault — we shipped the GitHub app first, the long-tail pages rank for "fix GitHub issues with AI," and the first demo everyone sees is a screenshot of a pull request.

The actual product is a debugging agent. Most coding agents (Claude Code, Cursor, Copilot) are bad at debugging because they live in the one place production bugs don't surface: the editor.

where bugs actually live

Think about the last real bug you debugged. Not a failing test. A real one. The one that took six hours and three engineers, and the fix landed in a service nobody on the call had touched that quarter.

It started as a schema change. Shipped forty minutes earlier, slipped past canary because the failure mode only shows up under a specific tenant's query pattern. Downstream, a retry storm; further downstream, an SLO burn on a service two hops removed from the actual fault. By the time anyone is looking at Grafana, you have a 40MB log file, a Jaeger trace with 180 spans, a Sentry issue with three stack traces that look similar but aren't, and a Jira ticket from a customer that landed in a Linear inbox because the product team's routing is different from yours.

The bug is nowhere in the editor. The bug is distributed across five tools and three teams. The editor is where you'll eventually write the fix, but you don't know what to write yet — you don't even know which service is at fault.

This is what coding agents miss. They're exceptional at "here's a file, add a feature." They are structurally blind to "here's a burning pager, tell me what's wrong." A coding agent sees the editor. An oncall engineer sees the production symptoms. Those are different worlds, and a tool that only sees one of them can't close the loop on the other.

five things we do that coding agents structurally can't

These aren't "nice to have" deltas. They're the places where the coding-agent shape of the problem breaks down and a debugging agent has to do something different.

Context you don't have to assemble by hand

The coding-agent contract is "paste the relevant thing in, I'll generate code." That contract breaks on a real incident, because the relevant thing is thirty relevant things: OpenTelemetry traces in Jaeger, logs from Datadog or Elastic, Sentry error groups, Grafana panels, the Jira thread, the screenshot QA filed, the Loom video from the customer, the commit history of the two suspect services, the last four deploys. Nobody is pasting that into Claude Code. By the time you've collected it, the alert is an hour old.

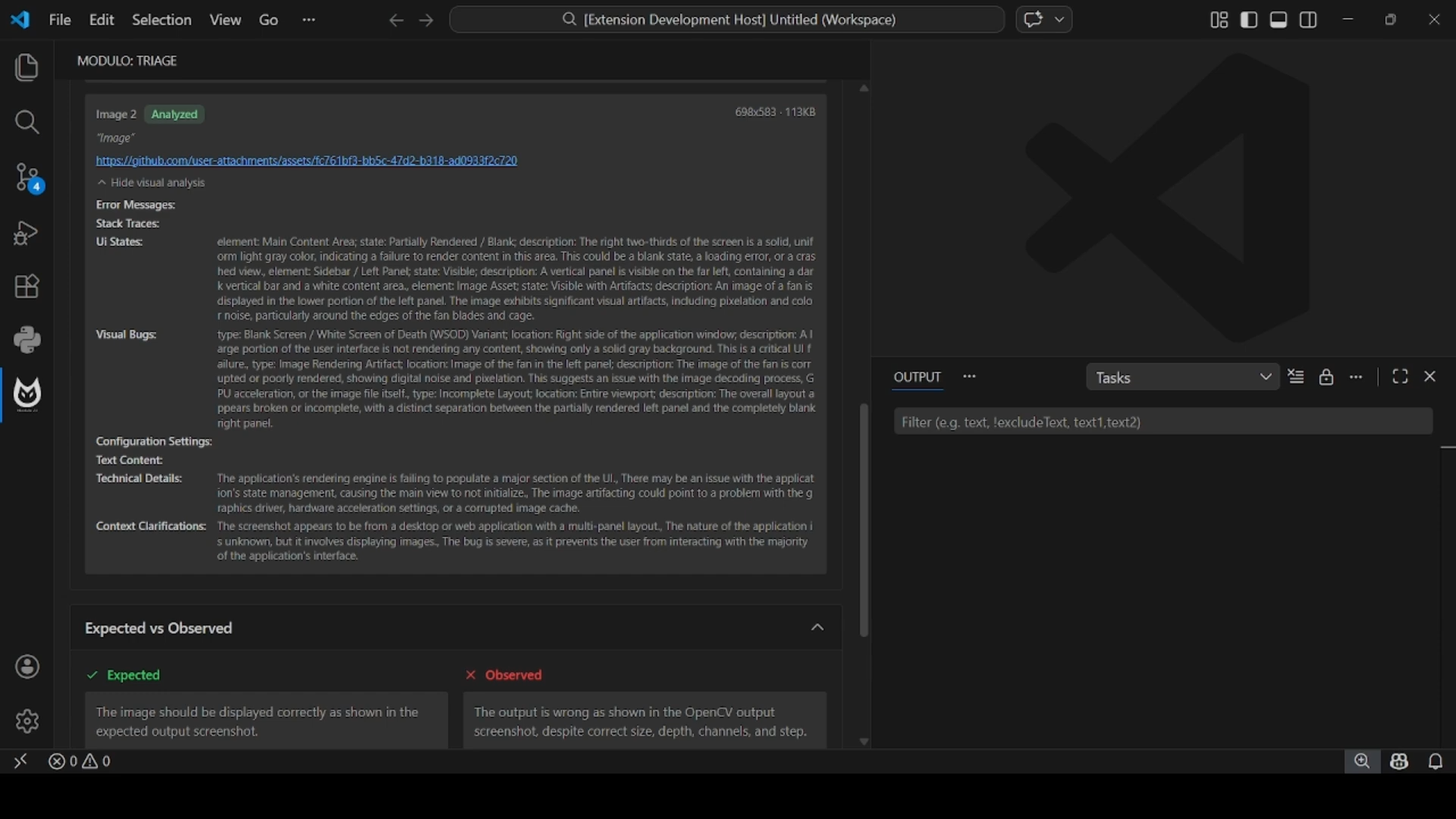

Modulo fetches it. The agent pulls logs, traces, metrics, issue comments, historical context, images, and videos on its own, writes its triage state back to the bug, updates the comment thread as it reasons, and opens the PR when it has something. You describe the symptom; it assembles the room.

Context that doesn't blow past 200K tokens

Cursor and Claude Code cap out around a 200K-token context window. My VS Code extension's debug logs alone overwhelm Claude Code's context on a bad day. A single production log file routinely clears 40MB, which at a rough four bytes per token is on the order of ten million tokens. You can't fit that in one prompt, and chunking it naively produces agents that reason about the first page and confidently hallucinate about the rest.

Modulo runs a swarm: specialized agents chunk the evidence contextually (by trace span, by log window, by service, by suspect deploy), reason locally, and reconcile their findings. Unlimited context isn't a token-count flex. It's what happens when you stop trying to cram the whole incident into one model call.
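To make the chunk, reason locally, reconcile shape concrete, here is a minimal sketch. It is not Modulo's implementation: the `LogRecord` shape, the function names, and the "count ERROR lines" stand-in for a per-chunk agent are all assumptions made for illustration.

```python
from collections import defaultdict
from datetime import datetime, timedelta, timezone
from typing import Iterable

# Hypothetical record shape: (timestamp, service, message).
LogRecord = tuple[datetime, str, str]


def chunk_by_service_and_window(
    records: Iterable[LogRecord], window: timedelta
) -> dict[tuple[str, int], list[LogRecord]]:
    """Group records by service and by fixed-size time window."""
    chunks: dict[tuple[str, int], list[LogRecord]] = defaultdict(list)
    for ts, service, message in records:
        bucket = int(ts.timestamp() // window.total_seconds())
        chunks[(service, bucket)].append((ts, service, message))
    return dict(chunks)


def analyze_chunk(chunk: list[LogRecord]) -> dict:
    """Stand-in for a per-chunk agent: it only looks at ERROR lines."""
    errors = [record for record in chunk if "ERROR" in record[2]]
    first = min((record[0] for record in errors), default=None)
    return {"errors": len(errors), "first_error": first}


def reconcile(findings: dict[tuple[str, int], dict]) -> list[tuple[str, datetime]]:
    """Merge local findings into one timeline: services by first error."""
    first_seen: dict[str, datetime] = {}
    for (service, _bucket), finding in findings.items():
        ts = finding["first_error"]
        if ts is not None and (service not in first_seen or ts < first_seen[service]):
            first_seen[service] = ts
    return sorted(first_seen.items(), key=lambda item: item[1])


# Made-up sample data: the db errors first, the api errors follow.
t0 = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
records = [
    (t0 + timedelta(minutes=5), "api", "ERROR retry budget exhausted"),
    (t0, "db", "ERROR column missing"),
    (t0 + timedelta(minutes=1), "db", "INFO ok"),
    (t0 + timedelta(minutes=20), "api", "ERROR timeout"),
]
chunks = chunk_by_service_and_window(records, timedelta(minutes=10))
findings = {key: analyze_chunk(chunk) for key, chunk in chunks.items()}
timeline = reconcile(findings)
```

No single call ever sees the whole log. Each chunk is small enough to reason about on its own, and only the compact findings travel to the reconcile step.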

Fixes that come with proof they work

The default coding-agent output is a diff and a vibe. You trust it or you don't. Modulo's output is a diff plus a reproduction test case that fails on the broken code, passes on the fix, and leaves the rest of the suite green.

If the agent can't produce that evidence — can't reproduce the bug, can't show the fix removes it — the fix doesn't ship. "Validated fix" isn't a marketing slogan; it's the floor. A hypothesis without a reproduction is a guess, and guessing in production costs money we can measure.
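The gate described above can be sketched in a few lines. This is an illustration under stated assumptions, not Modulo's validator: code under test is modelled as a plain function, a test is a callable that returns True on pass, and `validate_fix`, `Verdict`, and the paging example are hypothetical names.

```python
from dataclasses import dataclass
from typing import Callable

# Assumption: the code under test is a function, and a check returns True on pass.
Code = Callable[[int], int]
Check = Callable[[Code], bool]


@dataclass
class Verdict:
    ship: bool
    reason: str


def validate_fix(broken: Code, fixed: Code, repro: Check, suite: list[Check]) -> Verdict:
    """Ship only if the repro fails before, passes after, and the suite stays green."""
    if repro(broken):
        return Verdict(False, "reproduction test does not fail on the broken code")
    if not repro(fixed):
        return Verdict(False, "reproduction test still fails after the fix")
    if not all(check(fixed) for check in suite):
        return Verdict(False, "fix breaks an existing test")
    return Verdict(True, "reproduced, fixed, suite green")


# Made-up bug: number of 10-item pages needed for n items.
def broken_pages(n: int) -> int:
    return n // 10  # drops the final partial page


def fixed_pages(n: int) -> int:
    return (n + 9) // 10


def symptom_patch(n: int) -> int:
    return 2  # makes the repro pass, fixes nothing


repro: Check = lambda pages: pages(11) == 2
suite: list[Check] = [lambda pages: pages(10) == 1, lambda pages: pages(0) == 0]

good = validate_fix(broken_pages, fixed_pages, repro, suite)
bad = validate_fix(broken_pages, symptom_patch, repro, suite)
```

The symptom patch is the interesting case: it satisfies the reproduction test and is still rejected, because the rest of the suite has to stay green.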

A triage session that isn't trapped on your laptop

Cursor and Claude Code sessions are per-machine. The context lives in your local chat history. The engineer who picks the investigation back up after a handoff gets none of it — they start over, re-deriving what someone else already figured out an hour earlier.

Modulo's triage sessions are shareable artifacts. You post your session to the bug; another engineer loads it in their VS Code and takes the investigation from where you left off, with the same hypotheses, the same evidence ledger, the same rewind points. Debugging is a handoff-heavy activity. Most tools pretend it isn't.
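One way to picture a session as an artifact rather than local chat history is a plain serializable record. The sketch below is an assumption about shape only: `TriageSession` and its fields are hypothetical names, and the real session format is not described here.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class TriageSession:
    """Hypothetical session record; field names are illustrative."""
    bug_id: str
    hypotheses: list[str] = field(default_factory=list)
    evidence: list[dict] = field(default_factory=list)  # the evidence ledger
    rewind_points: list[str] = field(default_factory=list)

    def to_json(self) -> str:
        # A text payload can be attached to the bug and loaded elsewhere.
        return json.dumps(asdict(self), sort_keys=True)

    @classmethod
    def from_json(cls, payload: str) -> "TriageSession":
        return cls(**json.loads(payload))


# Engineer A exports; engineer B restores the same state on another machine.
exported = TriageSession(
    bug_id="BUG-123",
    hypotheses=["schema change broke tenant query"],
    evidence=[{"source": "trace", "note": "slow span in billing service"}],
    rewind_points=["after-first-hypothesis"],
)
restored = TriageSession.from_json(exported.to_json())
```

The point of the round trip is that nothing about the investigation depends on whose laptop it started on.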

A conversation you can rewind

In a coding-agent chat, once the model goes down a wrong path, the path is in the context forever. You either live with the bias or start a new session and lose your state.

Modulo versions the conversation and the artifacts it generates. When the agent drifts — commits to the wrong hypothesis, proposes a fix that patches a symptom instead of the cause — you rewind to the last good point and try a different branch. This matters more than it sounds. Debugging is not linear. Pretending it is produces tools that feel great in demos and useless in the middle of an incident.
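A rewindable conversation is easiest to think of as a tree of steps with a movable head, much like commits in version control. The following is a minimal sketch of that idea with hypothetical names (`Session`, `Step`, `rewind`); it is not a description of how Modulo stores sessions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Step:
    id: int
    parent: Optional[int]
    note: str


class Session:
    """Append-only tree of steps; the head marks the active branch."""

    def __init__(self) -> None:
        self.steps: dict[int, Step] = {}
        self.head: Optional[int] = None

    def record(self, note: str) -> int:
        step_id = len(self.steps)
        self.steps[step_id] = Step(step_id, self.head, note)
        self.head = step_id
        return step_id

    def rewind(self, step_id: int) -> None:
        if step_id not in self.steps:
            raise KeyError(step_id)
        self.head = step_id  # nothing is deleted; only the head moves

    def context(self) -> list[str]:
        """Notes on the path from the root to the head, oldest first."""
        notes: list[str] = []
        cursor = self.head
        while cursor is not None:
            step = self.steps[cursor]
            notes.append(step.note)
            cursor = step.parent
        return notes[::-1]


session = Session()
symptom = session.record("symptom: SLO burn on checkout")
session.record("hypothesis: retry storm is the cause")
session.record("fix: raise retry budget")  # patches a symptom
session.rewind(symptom)  # back to the last good point
session.record("hypothesis: schema change is the cause")
```

After the rewind, `context()` contains only the symptom and the new hypothesis, so the wrong path no longer biases what the agent sees. The abandoned branch is still stored and can be revisited.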

None of these are features bolted onto a coding agent. They're what the shape of the tool has to be if debugging, not code generation, is the load-bearing use case.

alert triage is not ticket creation

A lot of "AI SRE" tools stop at diagnosis. An alert fires; a bot posts a summary to Slack; a human does the rest. That's a nicer PagerDuty, not a debugging agent.

Our model is different. An alert — a PagerDuty page, a Sentry regression, a Datadog monitor, a webhook from your APM — kicks off a triage session. The agent investigates the way a senior SRE would: reads the relevant spans, pulls recent deploys, correlates the timing, diffs the suspect services, proposes two or three competing hypotheses, ranks them by evidence. What lands in your Slack is not "something is broken, check it out." It's "here are three plausible root causes, here's the evidence for each, here's the one I'd investigate first, and here's a reproduction test case for the top hypothesis."
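The "rank them by evidence" step can be illustrated with a deliberately naive scoring rule: supporting observations minus contradicting ones. The rule, the `Hypothesis` class, and the sample observations are assumptions for this sketch; a real ranking would weigh evidence rather than count it.

```python
from dataclasses import dataclass, field


@dataclass
class Hypothesis:
    """Hypothetical container for one candidate root cause."""
    cause: str
    supporting: list[str] = field(default_factory=list)
    contradicting: list[str] = field(default_factory=list)

    @property
    def score(self) -> int:
        # Naive rule for illustration: each observation counts once.
        return len(self.supporting) - len(self.contradicting)


def rank(hypotheses: list[Hypothesis]) -> list[Hypothesis]:
    """Highest-scoring hypothesis first."""
    return sorted(hypotheses, key=lambda h: h.score, reverse=True)


candidates = [
    Hypothesis(
        "retry storm from the gateway",
        supporting=["retry count spikes"],
        contradicting=["spike starts after the first database error"],
    ),
    Hypothesis(
        "schema change in the database",
        supporting=["deploy landed 40 minutes before the alert",
                    "errors name the new column"],
    ),
    Hypothesis(
        "one tenant's query pattern",
        supporting=["failing traces share a tenant"],
    ),
]
ranked = rank(candidates)
summary = [f"{h.cause} (score {h.score})" for h in ranked]
```

What matters in the output is that each hypothesis carries its evidence with it, so the person reading the summary can disagree with the ranking for a stated reason.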

The five properties above are what make that workflow possible. Auto-gathered context is what lets the agent investigate before a human shows up. Unlimited context is what lets it actually read the 40MB log file instead of guessing at the first page. Fix validation is what lets it go from "I think it's this" to "here is the evidence it's this, and here's the diff that removes it." Shareable sessions are what let the next engineer pick up without a context transplant. Versioning is what lets any of them walk the agent back when it's wrong.

what this means for you

If you're on an oncall rotation in a distributed system, the question worth asking is not "does this tool write code?" It's "where does this tool live, and what does it see?"

If it only lives in the editor, it can help you write the fix once you've already figured out what's wrong. Useful, but small.

If it lives in your issue tracker, your observability pipe, your alert router, and your editor — and it carries the same evidence, the same hypotheses, the same rewindable state across all of them — it can meet you at the moment the page fires and walk with you through the whole loop. That's the difference between a coding agent and a debugging agent. That's what we're building.

The GitHub app is still there. It's a great front door. But if that's the only part of Modulo you've seen, you've seen about fifteen percent of the surface area. The real product is the one that turns up when the pager fires, in whatever tool is open at the time.

We're still early on a lot of this. There's real incremental work to do before we're at feature parity with coding agents on non-debugging tasks, and we're honest about that — Claude and Cursor have capabilities we don't. Our issue-tracker coverage is uneven across surfaces. Observability ingestion is strongest for OpenTelemetry and Datadog; Sentry and Grafana are well-supported but still maturing. Self-hosted deployment works; there are rough edges. If you're running a team of 20–150 engineers in a cloud-native stack and you feel the giant-log-file problem as a specific pain, we'd like to hear where we land and where we don't.

That's the honest pitch. Not "a GitHub bot."